TL;DR:

- Building the wrong SaaS product is costly in money, effort, and opportunity.

- Effective validation requires structured customer discovery, clear hypotheses, and confirmed commercial signals before building.

Building the wrong product is one of the most expensive mistakes a SaaS founder can make. Not just in money, but in months of engineering effort, team morale, and opportunity cost. The CB Insights research on startup failure consistently points to "no market need" as a top cause of failure, and yet founders keep skipping the one thing that could have prevented it: structured validation before committing to a build. This guide gives you a concrete, sequential process for validating your B2B SaaS idea the right way, starting with customer evidence and ending with commercial proof.

Table of Contents

- What you need before starting validation

- Step-by-step guide to validating your SaaS idea

- Common mistakes and how to avoid them

- How to measure validation results and product-market fit

- What most SaaS validation advice misses

- Get expert help to validate and launch your SaaS solution

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Start with customer discovery | Structured conversations with target buyers are the foundation for meaningful validation. |
| Test willingness to pay | Aim for commercial signals like LOIs or prepayments, not just verbal interest. |
| Use lean validation experiments | Focus on testing your riskiest assumptions with the simplest possible experiments. |
| Measure product-market fit | Use benchmarks like the 40% "very disappointed" rule to confirm strong demand. |
| AI is a tool, not a shortcut | AI can accelerate research but can't replace direct customer evidence or commitment. |

What you need before starting validation

Validation done poorly is almost as dangerous as skipping it entirely. Running a quick Twitter poll or asking a few friends whether they'd use your product gives you false confidence. Real validation starts with preparation.

The foundation is structured product discovery: a disciplined process for learning what problems your target buyers actually have, how they currently solve them, and what they'd genuinely pay to fix. As Forbes notes, you should validate external opportunity through structured customer discovery before ever touching a technical solution.

Before you run a single customer interview, you need three things in place:

- A specific customer profile. Not "SMB owners" or "HR teams." A precise archetype: company size, industry, job title, and the context in which they face the problem you're solving. The more specific, the more useful your interviews will be.

- A written value hypothesis. One or two sentences stating what you believe to be true: who has the problem, how painful it is, and why your solution would be meaningfully better than alternatives. Write this down before talking to anyone, so you can test it rather than subconsciously fishing for confirmation.

- Basic competitive mapping. Know who else is solving this problem, even partially. This shapes your interview questions and helps you understand whether buyers are actively looking for alternatives or already satisfied with their workarounds.

The tools you need at this stage are deliberately simple. Use a spreadsheet to track interview contacts and outcomes. Use a structured note-taking template to capture verbatim quotes during calls. Use a lightweight synthesis tool to cluster findings by theme. You do not need a $500/month research platform.

| Validation stage | Recommended tool | Purpose |
|---|---|---|
| Contact sourcing | LinkedIn Sales Navigator or Apollo | Identify and reach target buyers |
| Interview scheduling | Calendly | Remove friction from booking |
| Note capture | Notion or structured Google Doc | Consistent, searchable records |
| Insight synthesis | Affinity mapping in Miro | Cluster themes across interviews |
| No-code prototype | Webflow or Carrd | Landing page smoke tests |

AI in SaaS idea validation can genuinely accelerate the research and synthesis phases. Tools built on large language models can summarize interview transcripts, surface recurring themes, and even generate initial market segmentation hypotheses. But AI cannot replace direct customer evidence. It can tell you what patterns exist in your data. It cannot tell you whether someone will actually pay.

Pro Tip: Do not provision any cloud infrastructure, hire a developer, or write a line of code until you have completed at least eight to ten structured customer interviews with your exact target buyer profile. The data from those conversations is worth more than any prototype.

Step-by-step guide to validating your SaaS idea

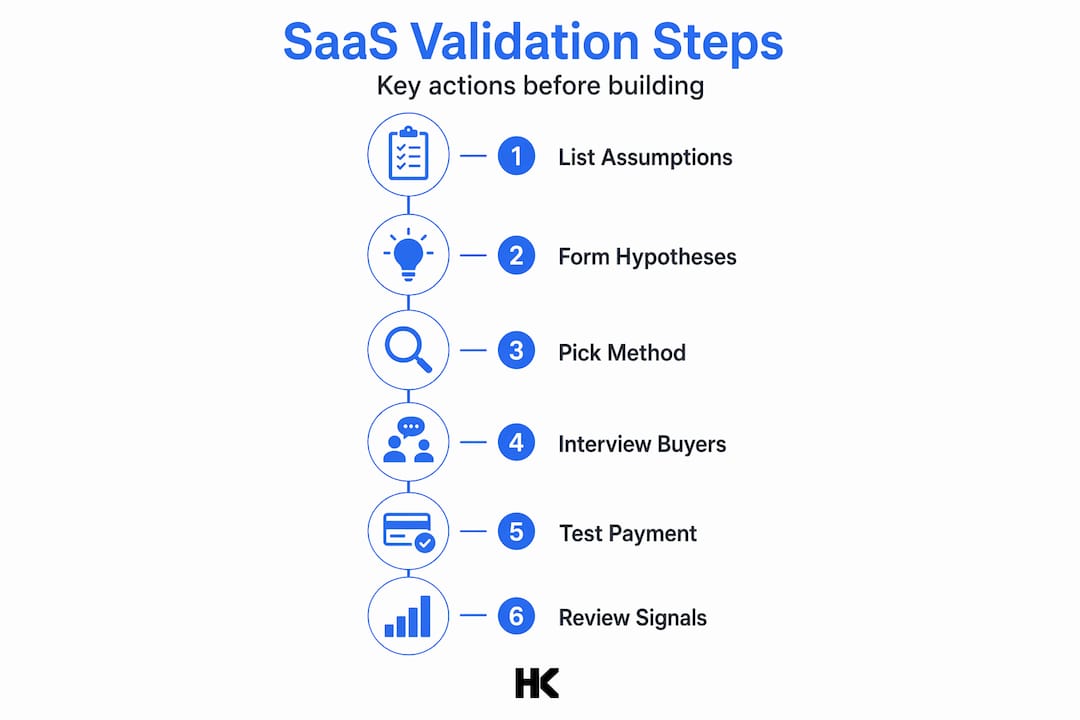

With your prerequisites in place, follow these sequential steps to avoid common SaaS validation pitfalls.

Step 1: List and rank your riskiest assumptions.

Every SaaS idea rests on a stack of assumptions. Some are low-stakes: your UI will be usable, onboarding will take less than 30 minutes. Others are existential: the problem is painful enough to spend budget on, buyers can switch from their current tool, the person feeling the pain also controls the buying decision. Write every assumption down, then rank them by how much damage the business would take if they turned out to be false. Start testing the ones at the top.

Step 2: Turn each assumption into a falsifiable hypothesis.

A falsifiable hypothesis has a clear prediction and a measurable outcome. "HR managers at companies with 100 to 500 employees spend more than four hours per week on compliance reporting and would pay at least €200/month to eliminate that work" is testable. "HR people want better compliance tools" is not. Define your success metric before you gather data, not after. This is what makes lean startup methodology actually work: falsifiable assumptions, pre-committed decision rules, and the cheapest possible test.

Step 3: Choose your validation method.

Different assumptions require different tests. Not every signal is equally strong.

| Method | What it tests | Strength of signal | Time to result |
|---|---|---|---|
| Problem interviews | Problem existence and pain severity | Medium | 2 to 4 weeks |
| Landing page smoke test | Interest and messaging resonance | Low to medium | 1 to 2 weeks |
| Concierge MVP | Willingness to engage with your process | Medium | 3 to 6 weeks |
| Payment pilot | Willingness to pay | High | 4 to 8 weeks |
| Letter of Intent (LOI) | Commercial commitment from buyer | Very high | 2 to 6 weeks |

Step 4: Run structured customer interviews.

Aim for at least ten conversations with buyers who match your exact customer profile. Use open-ended questions. Ask about past behavior, not hypothetical future behavior. "Tell me about the last time this problem cost you real time or money" gives you far better data than "would you use a product that did X?" Record every session with permission, and review transcripts for language your target buyers use naturally. That language belongs in your positioning.

You can validate your SaaS idea at multiple levels, but the interview phase is where you decide whether to proceed to any form of technical test at all.

Step 5: Seek commercial commitment before building.

B2B validation should test willingness to pay explicitly, not just user interest. The standard approach is asking for a Letter of Intent from buyers who say they're interested. An LOI is not a purchase order, but it requires the buyer to go on record. That friction separates polite enthusiasm from genuine demand. Some founders go further and run a payment pilot at a steep discount, collecting actual money for a solution they then deliver manually or with existing tools.

Step 6: Set your decision rules before you analyze results.

Commit in advance to what success looks like. "If seven out of ten interviewees confirm the problem is a top-three priority and three out of ten sign an LOI, we proceed to MVP build" is a real decision rule. Without this, founders rationalize ambiguous results into permission to keep building. Review your strategic SaaS validation criteria before the data comes in, not after.
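One way to keep yourself honest is to write the decision rule down as code before the first interview. The sketch below is only an illustration of the example rule above (seven of ten confirm a top-three priority, three of ten sign an LOI). The names `Interview` and `should_proceed` are hypothetical, and the thresholds are expressed as fractions on the assumption that you may end up with more than ten interviews.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Interview:
    top_three_priority: bool  # interviewee ranked the problem in their top three
    signed_loi: bool          # interviewee signed a Letter of Intent


def should_proceed(
    interviews,
    min_sample=10,                        # assumed minimum number of interviews
    min_priority_share=Fraction(7, 10),   # "seven out of ten"
    min_loi_share=Fraction(3, 10),        # "three out of ten"
):
    """Return True only if the pre-committed rule is met."""
    n = len(interviews)
    if n < min_sample:
        return False  # not enough evidence yet, so the rule cannot pass
    priority_share = Fraction(sum(i.top_three_priority for i in interviews), n)
    loi_share = Fraction(sum(i.signed_loi for i in interviews), n)
    return priority_share >= min_priority_share and loi_share >= min_loi_share


# Example: ten interviews, seven confirm the priority, three of those sign an LOI.
results = (
    [Interview(True, True)] * 3
    + [Interview(True, False)] * 4
    + [Interview(False, False)] * 3
)
print(should_proceed(results))
```

Because the thresholds are fixed as default arguments before any data exists, changing them after the fact is a visible edit rather than a quiet reinterpretation.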

Pro Tip: Real proof comes from commercial signals. Fifty people signing up for your email list means your landing page headline works. Three buyers signing an LOI means you have a business hypothesis worth testing with code.

Common mistakes and how to avoid them

Even with the right steps, common traps catch many founders. Here's how to sidestep them.

- Building before validating. This is the most common mistake and the most expensive. Founders with technical skills are especially vulnerable because building feels like progress. It is not validation.

- Confusing excitement with intent. A buyer who says "this is exactly what we need" and a buyer who signs an LOI are not the same person. Positive conversations feel great, but validated learning requires pre-committed decision rules, not accumulated enthusiasm.

- Testing the wrong assumptions. Founders often spend weeks optimizing their onboarding flow or feature list when they haven't yet confirmed the core problem exists at scale. Test existential assumptions first, everything else second.

- No exit criteria. Without a pre-defined "kill" threshold, founders persevere through failed validation indefinitely. Decide upfront: if you reach X interviews with fewer than Y commercial signals, you stop or pivot.

- Asking hypothetical questions. "Would you use this?" is not data. "How much did you spend last quarter trying to solve this?" is data. Structure your interview guide around past behavior.

"An MVP is a test, not your final product. Treat it as the cheapest experiment that can disprove your riskiest assumption, not as the first version of what you'll ship to paying customers."

The shortcut that works: ask for something uncomfortable early. Invite your three most enthusiastic interview subjects to pay a nominal amount or sign an LOI within the first two weeks of conversations. The response rate is your real signal. This approach helps you reduce SaaS MVP risk before you've written a line of code, and it's a strategy that also applies when you're deciding whether to launch your MVP fast without a technical co-founder on board.

How to measure validation results and product-market fit

Once you've run your tests, here's how to know if you're onto something real or need to pivot.

Start with your interview data. Tally the number of respondents who confirmed the problem as a top-three priority, the number who could name a specific budget for solving it, and the number who took a commercial action such as signing an LOI or joining a paid pilot. These three numbers give you a quick signal-strength score.

The most widely used benchmark for product-market fit comes from Sean Ellis. His methodology asks active users one question: "How would you feel if you could no longer use this product?" The 40% PMF benchmark holds that if 40% or more of your active users answer "very disappointed," you have achieved meaningful product-market fit. Below 25%, you likely have a retention problem. Between 25% and 40%, you're close but not there yet.
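The bands described above are simple enough to compute by hand, but a small helper makes the cut-offs explicit. This is a minimal sketch, not an official implementation of the survey: the function name `pmf_band` and the band labels are made up for illustration, and it assumes you have already counted "very disappointed" answers among active users.

```python
def pmf_band(very_disappointed: int, total_responses: int) -> str:
    """Classify a Sean Ellis survey result using the 25% and 40% cut-offs."""
    if total_responses <= 0:
        raise ValueError("total_responses must be positive")
    if not 0 <= very_disappointed <= total_responses:
        raise ValueError("very_disappointed must be between 0 and total_responses")
    # Integer comparison avoids floating-point edge cases at exactly 25% or 40%.
    if very_disappointed * 100 >= 40 * total_responses:
        return "meaningful product-market fit"
    if very_disappointed * 100 >= 25 * total_responses:
        return "close, but not there yet"
    return "likely retention problem"


# Example: 18 of 50 active users answered "very disappointed" (36%).
print(pmf_band(18, 50))
```

The source does not say which band a result of exactly 25% falls into; this sketch places it in the middle band, which is an assumption.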

Signal strength at a glance

| Signal type | Strength | What it means |
|---|---|---|
| Interview: "This is interesting" | Very weak | Keep exploring, no commitment |
| Email list signup | Weak | Messaging resonated, no demand proof |
| Demo request | Moderate | Active interest, still no commercial signal |
| Signed LOI | Strong | Buyer is on record with intent to pay |
| Paid pilot or prepayment | Very strong | Commercial demand confirmed |
| 40%+ "very disappointed" response | Strong PMF | Retention and fit are validated |

What strong validation looks like in B2B SaaS: you have three or more signed LOIs from buyers who match your exact customer profile, at least one of them has paid something (even a token amount), and your demo-to-pilot conversion rate is above 30%. If you're below these thresholds, you have interesting data but not a validated business case.
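If you track these numbers in a spreadsheet, the thresholds above reduce to a three-part check. The sketch below is one possible encoding, with hypothetical names (`is_validated` and its parameters). It assumes the LOI and payment counts include only buyers who match your exact customer profile.

```python
def is_validated(signed_lois: int, paying_buyers: int, demos: int, pilots: int) -> bool:
    """Check the three B2B thresholds: LOIs, at least one payment, demo-to-pilot rate."""
    if demos <= 0:
        return False  # no demos means no conversion rate to evaluate
    enough_lois = signed_lois >= 3
    someone_paid = paying_buyers >= 1
    # "Above 30%" is strict, so exactly 30% does not pass.
    conversion_ok = pilots * 100 > 30 * demos
    return enough_lois and someone_paid and conversion_ok


# Example: 3 LOIs, 1 token payment, 4 pilots out of 10 demos.
print(is_validated(signed_lois=3, paying_buyers=1, demos=10, pilots=4))
```

A `False` result here does not mean the idea is dead. It means you have interesting data but not yet a validated business case.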

Use your MVP-PMF checklist to track these signals systematically across your validation sprints. The goal is not to feel confident. The goal is to have evidence that would convince a skeptical investor or co-founder.

What most SaaS validation advice misses

Most validation guides focus on frameworks and tools. Few of them address the deeper pattern that causes B2B SaaS founders to get stuck even after following the steps correctly.

Here is the uncomfortable truth: the hardest part of validation is not running interviews or building a smoke test. It is maintaining intellectual honesty when the data is ambiguous. Founders who want their idea to work will find a way to interpret weak signals as confirmation. This is not a process failure. It is a psychology failure.

AI makes this worse, not better. I've seen founders use AI-powered survey tools to run automated validation studies, then cite the results as market proof. A 73% "interested" rate from a synthetic survey is not validation. It is a well-formatted illusion. AI can accelerate research synthesis and prototype generation, but it cannot replicate the friction of asking a real buyer to sign their name to a commitment.

The other thing most advice misses: B2B validation takes longer than B2C, and that is by design. Enterprise and mid-market buyers have procurement cycles, legal reviews, and budget constraints. A founder who completes ten interviews in two weeks and expects LOIs in week three is working on consumer timelines. Give yourself six to ten weeks for a complete B2B validation cycle, and build that into your runway planning.

"A pile of no-code prototypes or 100 upvotes on Product Hunt isn't validation. LOIs and purchase orders are."

The founders who get this right share one trait: they are more interested in being wrong quickly than being right eventually. They want to find the fatal flaw in their hypothesis before it costs them a year of their life. That mindset, combined with a disciplined process and a clear product strategy for SaaS, is what separates validated SaaS businesses from expensive learning experiences.

Get expert help to validate and launch your SaaS solution

Validation frameworks are clear on paper. Executing them under time pressure, with limited customer access and no prior B2B SaaS experience, is a different challenge entirely. That's where an experienced builder makes the difference.

At hanadkubat.com, I offer fixed-price strategy sprints at €1,500 specifically designed to help non-technical founders and early-stage teams scope and validate their ideas before committing to a full build. If validation goes well, I ship production-ready MVPs in 4 to 12 weeks starting from €18,000, with EU AI Act compliance and GDPR-aware architecture built in from day one. You work directly with the person writing the code. No project managers, no junior teams, no surprises. If your idea is ready to test, let's run the validation process together.

Frequently asked questions

What is the fastest way to validate a B2B SaaS idea?

Obtain written commitments such as Letters of Intent from your target buyers, proving real commercial interest before you write any code.

How do I know if my SaaS MVP has product-market fit?

Apply the Sean Ellis benchmark: if 40% or more of your active users say they'd be "very disappointed" without your product, you have a strong product-market fit signal.

Does AI replace customer interviews in SaaS validation?

No. AI can compress research and synthesize findings faster, but the core evidence still comes from direct conversations and commercial signals like payments or signed LOIs.

What is the difference between validation and feasibility?

Validation tests whether real market demand exists for your idea. Feasibility is a separate internal check that asks whether you can actually build and deliver the solution at the required cost and quality.