TL;DR:

- Startups often underinvest in testing, risking costly bugs, customer loss, and technical debt. Implementing automated end-to-end, risk-based, and MVP testing strategies enables fast, reliable releases, especially under EU AI Act requirements. Prioritizing early, focused testing fosters sustainable growth and regulatory compliance in AI-driven SaaS development.

Most founders treat testing as the thing you do after the real work is done. That framing is exactly why so many startups ship broken products, lose customers in the first month, and spend more on emergency fixes than they ever would have on prevention. Testing in a startup is not a quality checkbox; it is the mechanism that lets you move fast without accumulating the kind of technical debt that eventually stops you cold. For DACH B2B SaaS teams building AI features under the EU AI Act's tightening deadlines, the stakes are even higher.

Table of Contents

- Why startups often underestimate the role of testing

- Key testing strategies for B2B SaaS startups focusing on AI and MVPs

- Navigating EU AI Act compliance through effective testing and documentation

- Deep insights: common pitfalls and expert testing guidance for startup AI and MVP development

- Comparing testing investment approaches: in-house, outsourced, and AI-automated models for startups

- Why the smartest startups test early and automate wisely

- Explore tailored testing solutions for your startup with Hanad Kubat

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Testing saves time and money | Early and automated testing prevents costly production bugs, saving startups from expensive fixes and delays. |
| Automated E2E tests pay back fast | Automated end-to-end testing offers ROI within months and drastically reduces user-impacting bugs over the first year. |
| EU AI compliance demands thorough testing | Startups with high-risk AI must document bias, accuracy, and robustness testing to comply with the EU AI Act by August 2026. |
| Start small, prioritize high-risk areas | Focus testing efforts on critical features and known bug-prone flows to maximize coverage with limited resources. |
| Smart testing fuels sustainable speed | Contrary to myth, systematic testing enables startups to move fast continuously without breaking critical functionality. |

Why startups often underestimate the role of testing

The argument against testing feels logical on the surface: you have limited engineers, a tight runway, and a market window that will not wait. So you ship. Testing feels like friction. It slows down feature delivery, adds sprint overhead, and in the early days, the app mostly works anyway.

In practice, most startups that skip testing to move faster end up releasing frequent, costly bugs directly to production. When those bugs hit, the cost is not just engineering time. It is customer trust, support tickets, churn, and reputation damage that is genuinely hard to recover from.

A few concrete patterns that reveal why this mindset persists:

- Developers at early-stage startups often allocate less than 5% of sprint time to testing

- "We'll add tests later" becomes a permanent state of affairs

- New engineers inherit untested codebases and are afraid to refactor anything

- Production incidents get patched quickly rather than turned into regression tests

"Fixing a bug in production costs up to 30 times more than catching it during development." This single number should reframe every conversation about whether you have time to write tests.

The startup technical delivery challenges that kill promising products are rarely about bad ideas. They are about engineering practices that compound over time until the codebase becomes too fragile to ship new features confidently.

Key testing strategies for B2B SaaS startups focusing on AI and MVPs

Understanding why startups skip testing leads naturally to the question of what to do instead. The good news: you do not need a dedicated QA team or a six-week test infrastructure project to get meaningful coverage.

Here are the testing methodologies for startups that deliver the most value per hour invested:

- Automated end-to-end (E2E) testing for critical paths. Focus on the flows that break your business if they fail: signup, billing, onboarding, and your core AI feature. Automated E2E testing reaches positive ROI in 3 to 6 months and reduces production bugs by 60 to 80% within a year.

- Risk-based testing. Not everything needs equal coverage. Prioritize payment flows, AI scoring and recommendation outputs, and any feature touching user data or permissions. Low-traffic admin pages can wait.

- MVP testing through real-world feedback. Before building full features, validate ideas against real user behavior with beta testing and user interviews. A five-person beta cohort will surface more critical issues than a week of internal QA.

- Shift-left testing. Write tests as you build, not after. This is a process change more than a tooling change, and it costs almost nothing once the habit is established.

- Manual testing for exploratory edge cases. Automation catches known failure modes. A thoughtful engineer spending two hours clicking through a new feature before release catches the unknown ones.
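
The risk-based prioritization described above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the `Flow` class, the 1 to 5 scales, and the impact-times-likelihood score are all assumptions chosen for the example, and the flow names and scores are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    business_impact: int     # assumed scale: 1 (cosmetic) to 5 (revenue or data loss)
    failure_likelihood: int  # assumed scale: 1 (stable) to 5 (breaks often)


def prioritize(flows: list[Flow], budget: int) -> list[str]:
    """Return the names of the `budget` highest-risk flows, riskiest first."""
    ranked = sorted(
        flows,
        key=lambda f: f.business_impact * f.failure_likelihood,
        reverse=True,
    )
    return [f.name for f in ranked[:budget]]


# Hypothetical scores for illustration only.
flows = [
    Flow("signup", business_impact=5, failure_likelihood=3),
    Flow("billing", business_impact=5, failure_likelihood=4),
    Flow("ai_scoring", business_impact=4, failure_likelihood=4),
    Flow("admin_settings", business_impact=2, failure_likelihood=1),
]

print(prioritize(flows, budget=3))  # ['billing', 'ai_scoring', 'signup']
```

The low-traffic admin page drops out of the first automation pass, which matches the idea that not everything needs equal coverage.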

The benefits of testing in startups become visible fastest when you validate your MVP with real users before investing heavily in infrastructure. This is especially true for AI features where model behavior in production rarely matches behavior in controlled testing environments.

Pro Tip: Start with five core critical-flow E2E tests built around your most recent production incidents. This immediately covers your highest-risk scenarios without requiring a testing overhaul.
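
As a sketch of what turning an incident into a test looks like, assume a hypothetical incident where a 120% promo code produced a negative invoice. The function `invoice_total_cents` and the incident itself are invented for illustration; the tests are plain assert-style functions that a runner such as pytest would collect.

```python
def invoice_total_cents(subtotal_cents: int, discount_percent: int) -> int:
    """Hypothetical billing helper; the clamp below is the fix for the incident."""
    discount_percent = max(0, min(discount_percent, 100))
    return subtotal_cents * (100 - discount_percent) // 100


def test_discount_over_100_percent_never_goes_negative():
    # Regression test for the hypothetical incident: the total must stop at zero.
    assert invoice_total_cents(10_000, 120) == 0


def test_normal_discount_still_applies():
    # Guard against the fix breaking the ordinary path.
    assert invoice_total_cents(10_000, 25) == 7_500
```

The first test pins the exact failure path from production, and the second confirms the fix did not change normal behavior. Five pairs like this, one per recent incident, would make up the starter suite described above.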

When scoping your first automation pass, the product validation process should directly inform which tests to write first. The features users rely on most are the features that must not break. Your SaaS MVP tech checklist is a useful starting point for identifying those paths systematically.

Navigating EU AI Act compliance through effective testing and documentation

With testing strategies in place, founders building AI features for the DACH market face an additional layer of obligation. The EU AI Act is not theoretical: providers of high-risk AI systems must document testing for bias, accuracy, and robustness by August 2, 2026, and maintain those records for 10 years.

For B2B SaaS companies, this affects more products than most founders realize. HR automation tools, credit scoring, access control systems, and certain education platforms all fall into high-risk categories under Annex III.

What Annex IV documentation requires:

- Test datasets with provenance and selection methodology

- Bias evaluations across gender, age, and ethnicity

- Accuracy and robustness metrics with thresholds

- Performance monitoring design and incident response plans

The fairness evaluation across demographics is the most labor-intensive piece of this documentation. It is not something you can generate retroactively from production logs.
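
One way to avoid retroactive reconstruction is to write a structured record on every evaluation run. The sketch below is an assumption about how such a record could look, not the legal wording of Annex IV: the class names, field names, and example values are all hypothetical.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class MetricResult:
    name: str
    value: float
    threshold: float  # minimum acceptable value, fixed before the run

    @property
    def passed(self) -> bool:
        return self.value >= self.threshold


@dataclass
class EvaluationRecord:
    model_version: str
    dataset_name: str
    dataset_provenance: str  # where the test data came from
    selection_method: str    # how test samples were chosen
    metrics: list[MetricResult] = field(default_factory=list)

    def to_json(self) -> str:
        payload = asdict(self)
        # asdict() skips properties, so add the pass/fail verdict explicitly.
        for entry, result in zip(payload["metrics"], self.metrics):
            entry["passed"] = result.passed
        return json.dumps(payload, indent=2, sort_keys=True)


# Hypothetical values for illustration only.
record = EvaluationRecord(
    model_version="screening-model-0.3.1",
    dataset_name="holdout-sample",
    dataset_provenance="anonymized applications held out from training",
    selection_method="stratified random sample by age band and gender",
    metrics=[
        MetricResult("accuracy", value=0.89, threshold=0.85),
        MetricResult("min_subgroup_accuracy", value=0.62, threshold=0.80),
    ],
)
print(record.to_json())
```

Storing one such JSON document per model version, alongside the model artifact, gives you dated evidence of what was tested and against which thresholds.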

| AI feature type | Risk classification | Key testing obligation | Documentation deadline |
|---|---|---|---|
| HR candidate screening | High-risk | Bias audit across demographics | August 2, 2026 |
| Credit or financial scoring | High-risk | Accuracy + robustness testing | August 2, 2026 |
| Recommendation engine | Limited risk | Explainability traces | Ongoing |
| Internal productivity tool | Minimal risk | Audit logging | Ongoing |

Even if your AI feature falls outside the high-risk category, skipping audit logging and explainability traces now means expensive retrofitting later. The role of QA in startups operating under EU regulation extends well beyond functional testing into compliance documentation. When validating SaaS ideas that involve AI, factoring in compliance testing costs from the start is not optional.
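
Audit logging with an explainability trace can start very small. The following is a minimal sketch under stated assumptions: the function `audit_entry`, its field names, and the idea of storing a hash of the input instead of the raw values are illustrative choices, not requirements taken from the Act.

```python
from __future__ import annotations

import hashlib
import json
from datetime import datetime, timezone


def audit_entry(
    model_version: str,
    features: dict,
    output: dict,
    top_factors: list[str],
) -> str:
    """Build one JSON line describing a single AI decision."""
    # Sort keys so the same input always produces the same hash.
    canonical_input = json.dumps(features, sort_keys=True).encode("utf-8")
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Assumption: store a hash of the input rather than the raw values.
        "input_sha256": hashlib.sha256(canonical_input).hexdigest(),
        "output": output,
        "top_factors": top_factors,  # simple explainability trace
    }
    return json.dumps(entry, sort_keys=True)


# Hypothetical call for illustration only.
line = audit_entry(
    model_version="recommender-1.2.0",
    features={"plan": "pro", "seats": 12},
    output={"recommendation": "upgrade", "score": 0.71},
    top_factors=["seats", "plan"],
)
print(line)
```

Appending one line like this per decision to an append-only store is usually enough to answer later which model version produced a given output and which factors drove it.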

Pro Tip: If you are building a new AI feature and are unsure of its risk classification, treat it as high-risk during design. Integrating testing and documentation requirements from the beginning is far cheaper than a post-hoc compliance sprint.

Deep insights: common pitfalls and expert testing guidance for startup AI and MVP development

With compliance and core strategies covered, the patterns that actually kill startup quality deserve direct attention. These are not theoretical risks; they show up repeatedly in real product teams.

The most common testing mistakes:

- Skipping alpha tests with a small user cohort. Skipping alpha testing entirely means missing 80% of critical bugs before release. A five-user alpha session is not a nice-to-have.

- Not converting production incidents into tests. Every time your app breaks in production, that failure path is a test case waiting to be written. Not writing it means you will fix the same bug twice.

- Manual, untested CRM lead scoring. Startups without tested lead qualification experience inconsistent conversion rates and miss revenue because sales teams act on unreliable data.

- Measuring AI model quality with accuracy alone. Generalized linear mixed models (GLMMs), which NIST has applied to AI evaluation, reveal performance variance across subgroups that a single accuracy score hides entirely. An 89% accurate model can perform at 62% accuracy for a specific demographic segment.

That last point deserves more attention. Most AI MVPs ship with a headline accuracy metric and a bar chart. GLMMs are a statistical technique that models performance across different groups and conditions simultaneously, so gaps between subgroups show up as measurable variance. For any AI feature touching hiring, lending, or access decisions, relying only on aggregate accuracy is not just technically weak; it creates regulatory exposure under the EU AI Act.

Pro Tip: Use GLMMs or equivalent variance analysis during AI MVP evaluation, not just at final validation. You want to catch demographic performance gaps during development, not during a regulatory audit.
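
A full GLMM needs a statistics library, but the underlying problem can be shown with something much simpler. The sketch below is not a GLMM: it is a plain per-subgroup accuracy breakdown, swapped in as the most basic form of variance analysis. The group names and the synthetic data are hypothetical, constructed to reproduce the 89% overall and 62% subgroup figures mentioned above.

```python
from collections import defaultdict


def accuracy_by_group(rows):
    """rows: iterable of (group, predicted, actual). Returns {group: accuracy}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in rows:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


# Synthetic data: 450 rows for group_a, 50 rows for group_b.
rows = (
    [("group_a", 1, 1)] * 414 + [("group_a", 1, 0)] * 36
    + [("group_b", 1, 1)] * 31 + [("group_b", 1, 0)] * 19
)

overall = sum(predicted == actual for _, predicted, actual in rows) / len(rows)
per_group = accuracy_by_group(rows)
worst_group = min(per_group, key=per_group.get)

print(f"overall: {overall:.2f}")  # overall: 0.89
for group, acc in sorted(per_group.items()):
    print(f"{group}: {acc:.2f}")  # group_a: 0.92, group_b: 0.62
print(f"largest gap vs overall: {overall - per_group[worst_group]:.2f} ({worst_group})")
```

Because the smaller group contributes only 50 of 500 rows, its 62% barely moves the headline number. A breakdown like this is a reasonable first check during development; a GLMM or similar model adds uncertainty estimates and handles several grouping factors at once.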

The technical delivery failures that sink funded startups frequently trace back to these exact gaps: no alpha cohort, no regression coverage from incidents, and AI evaluation that looked good on paper but fell apart in production.

Comparing testing investment approaches: in-house, outsourced, and AI-automated models for startups

The decision about how to staff and fund testing matters as much as what to test.

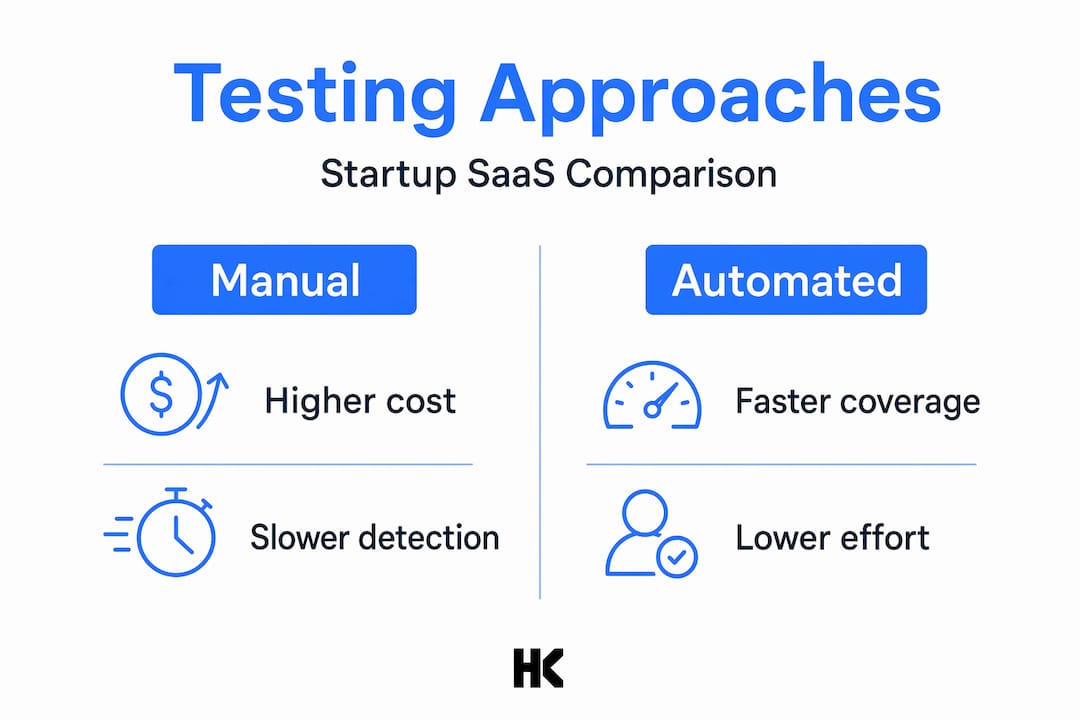

The three main models each have distinct tradeoffs:

- Full-time QA hire. Dedicated QA engineers cost $80,000 to $120,000 annually. For a seed-stage startup, that is a meaningful percentage of runway with uncertain ROI in the first six months.

- Outsourced testing. Outsourced QA provides flexible cost scaling that matches startup funding variability. You pay for the expertise you need when you need it, without the overhead of a full-time hire.

- AI-driven automated testing. The fastest path to positive ROI. Modern AI-powered test tools generate and maintain test cases with minimal manual input, providing continuous coverage between releases.

| Testing model | Annual cost (approx.) | Setup time | Maintenance load | Bug detection speed |
|---|---|---|---|---|
| Full-time QA hire | $80K–$120K | Immediate | Low | High |
| Outsourced QA | $15K–$50K | 1–2 weeks | Medium | Medium |
| AI-automated testing | $5K–$20K | 1–3 days | Low | High |
| Hybrid (auto + manual) | $10K–$40K | 1–2 weeks | Low | Highest |

Most startups benefit from a hybrid approach: AI-driven automation for critical path coverage combined with targeted manual testing for new feature releases. The testing investment models worth prioritizing are those that keep pace with your release cadence without requiring dedicated sprint time for test maintenance.

Why the smartest startups test early and automate wisely

Here is the part most testing articles will not say directly: the belief that testing slows you down is not just wrong, it is backwards. Every hour of testing you skip for the sake of velocity is borrowed time you will pay back at a higher interest rate.

Startups that ship fast and sustainably do so because they have confidence in their codebase. That confidence comes from automated tests running silently in the background on every pull request. When a test fails, the engineer knows immediately. When tests pass, the deploy happens. There is no manual verification process, no "quick check before release," no 2am incident call.

Testing enables data-driven decisions, reduces risk through controlled pilots, and creates the foundation for continuous product improvement. That is not a philosophical point. It is what separates startups that can ship twice a week from those that are afraid to deploy on Fridays.

The counterintuitive insight here is about automation scope. The startups that waste time on testing are usually the ones who tried to automate everything at once. They built massive test suites that nobody maintained, and when the tests broke or slowed CI by 20 minutes, the team started skipping them. Strategic automation means covering your top 10 to 15 critical flows with tight, fast-running tests, and expanding coverage only when you have proven each test adds signal.

The importance of early testing shows up most clearly in AI feature development. An AI feature that ships without bias testing, evaluation logging, or defined performance thresholds is not done. It is a liability dressed as a product.
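
That definition of "done" can be made mechanical. The following is a minimal sketch of a release check, assuming a hypothetical `AIFeatureStatus` record that your pipeline fills in; the class, field names, and blocker labels are illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AIFeatureStatus:
    bias_test_run: bool
    evaluation_logging_enabled: bool
    # Metric name -> minimum acceptable value; empty means no thresholds defined.
    thresholds: dict = field(default_factory=dict)


def missing_for_done(status: AIFeatureStatus) -> list[str]:
    """Return the release blockers; an empty list means the feature is done."""
    blockers = []
    if not status.bias_test_run:
        blockers.append("bias testing")
    if not status.evaluation_logging_enabled:
        blockers.append("evaluation logging")
    if not status.thresholds:
        blockers.append("performance thresholds")
    return blockers


# Hypothetical status for illustration only.
status = AIFeatureStatus(bias_test_run=True, evaluation_logging_enabled=False)
print(missing_for_done(status))  # ['evaluation logging', 'performance thresholds']
```

Running a check like this in CI turns the three requirements into a gate rather than a reminder.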

Quality-first culture does not mean slow culture. It means you respect the product enough to know what "done" actually means.

Pro Tip: Treat your test suite as an invisible safety net. It should run automatically, report failures loudly, and be completely silent when everything is fine. If your team thinks about tests as overhead, the suite is either too slow or covering the wrong things.

Explore tailored testing solutions for your startup with Hanad Kubat

Testing strategy, AI compliance architecture, and MVP validation are not areas where generic advice serves you well. The decisions you make in the first three months of a product determine how painful the next three years will be.

If you are building a B2B SaaS product in the DACH market and navigating AI features under EU AI Act obligations, I work directly with founders and CTOs to design testing approaches that fit your actual engineering capacity, not a textbook ideal. From EU AI Act compliance documentation to automated E2E test setup and MVP validation architecture, you work with the engineer doing the work. No account managers. No junior teams. Explore the engagement options at hanadkubat.com and see where a focused strategy sprint could save you months of rework.

Frequently asked questions

Why is testing particularly critical for startups developing AI features?

AI features require testing for accuracy, fairness, and robustness before they can be considered production-ready. High-risk AI systems under the EU AI Act also require documented bias and accuracy testing to avoid regulatory penalties.

How quickly can startups expect a return on investment from automated testing?

Most teams see positive ROI within 3 to 6 months. Automated testing reduces production bugs by 60 to 80% within the first year, which directly cuts incident response costs and churn.

What testing strategies suit startups with limited budgets and rapid release cycles?

Risk-based testing focused on high-impact paths, combined with targeted automation and shift-left practices, gives you the most coverage per hour spent. Prioritize high-risk areas over exhaustive coverage.

How does the EU AI Act impact testing documentation requirements for SaaS startups?

Startups with high-risk AI features must maintain detailed testing records including bias assessments for at least 10 years. Annex IV documentation covers test datasets, fairness evaluations, and performance thresholds with an August 2026 deadline.

What common pitfalls should startups avoid in their testing approach?

Skipping alpha testing, ignoring statistical variance in AI evaluation, and failing to automate regression cases from production incidents are the most damaging gaps. Skipping alpha tests lets most critical bugs slip through, and simple accuracy metrics routinely obscure dangerous performance gaps across user segments.