TL;DR:

- Technical validation is essential for EU-based SaaS AI products to meet regulatory standards and ensure real user effectiveness. It differs from verification by focusing on performance in real conditions, requiring systematic documentation and re-validation for changes. Emphasizing early, continuous validation practices helps founders navigate compliance and deliver trustworthy AI features.

If you are building AI features into your B2B SaaS product and operating in the EU, understanding technical validation is not optional. It is the difference between shipping something that passes your test suite and shipping something that survives a regulatory audit. Many founders conflate verification with validation and only discover the gap when they are already in production, staring down EU AI Act obligations they did not know applied to them. This article explains what technical validation means, the standards that govern it, how to perform it for AI features, and what DACH founders specifically need to do before 2026.

Table of Contents

- Understanding technical validation and verification

- Technical validation standards and requirements for SaaS and AI features

- Conducting technical validation: best practices for DACH SaaS founders

- Digital transformation and AI's role in evolving validation processes

- Why many SaaS founders misunderstand technical validation and what actually drives success

- How Hanad Kubat can help streamline your technical validation journey

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Technical validation defined | Technical validation confirms software meets user needs and regulatory requirements. It is distinct from verification, which checks conformance to the specification. |
| Standards matter | Microsoft Business Central and the EU AI Act require specific technical validation steps, including automated checks and production-level testing. |
| Pre-validation is key | Testing on small datasets early uncovers issues and links changes to compliance, saving resources during audits. |
| Digital maturity enables AI | Digitizing validation records is essential for safe AI adoption and improves efficiency in regulated SaaS environments. |
| Avoid common pitfalls | Misunderstanding validation scope or substituting general certifications for AI-specific validation can lead to compliance failures and product delays. |

Understanding technical validation and verification

These two terms get used interchangeably in most engineering teams. That is a mistake with real consequences.

Verification asks: did we build the software correctly according to the specification? It is about conformance. Static code analysis, unit tests, and code reviews are all verification activities. They confirm the artifact matches what was written down.

Technical validation asks a different question: does this software actually work for the users it was built for, under real conditions? It shifts the lens from "is it built right" to "is it the right thing." Standards formalize this separation: IEEE 1012 treats verification and validation as distinct activities, and IEEE 829-2008 covers the test documentation that supports both. Applying both correctly is credited with catching 60 to 80% of defects early.

Why does this distinction matter for SaaS AI products? Because an AI feature can pass every unit test and still fail users in production. A recommendation engine may return syntactically correct outputs that are statistically biased. A document extraction model may compile cleanly but misclassify edge cases your enterprise clients actually submit. Verification would not catch either failure. Only technical validation would.
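To make the contrast concrete, here is a minimal sketch. The `classify` function is a hypothetical stand-in for a document model, and the sample documents and the 0.9 accuracy threshold are assumptions chosen for illustration. The verification check passes because the output matches the specified format, while the validation check fails because the model misses the German invoices that a DACH client would actually submit.

```python
from dataclasses import dataclass


@dataclass
class Example:
    text: str
    expected_label: str


def classify(text: str) -> str:
    """Hypothetical stand-in model: labels a document by English keyword only."""
    return "invoice" if "invoice" in text.lower() else "other"


def verify_output_format() -> bool:
    """Verification: the output conforms to the spec (one of the known labels)."""
    return classify("Invoice 2024-001") in {"invoice", "other"}


def validate_on_real_samples(samples: list[Example], min_accuracy: float) -> tuple[bool, float]:
    """Validation: accuracy on samples that resemble real client submissions."""
    correct = sum(classify(s.text) == s.expected_label for s in samples)
    accuracy = correct / len(samples)
    return accuracy >= min_accuracy, accuracy


# Illustrative samples; real validation would use production-equivalent data.
samples = [
    Example("Invoice 2024-001", "invoice"),
    Example("Rechnung Nr. 4711", "invoice"),  # German invoice, missed by the keyword rule
    Example("Rechnung 2024/03", "invoice"),
    Example("Meeting notes", "other"),
]

format_ok = verify_output_format()
passed, accuracy = validate_on_real_samples(samples, min_accuracy=0.9)
print(f"verification passed: {format_ok}, validation passed: {passed} (accuracy {accuracy:.2f})")
```

The point of the sketch is the shape of the two checks, not the model: one asserts conformance to a spec, the other measures an outcome against data that looks like production.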

The practical benefits of validating SaaS features before full deployment include:

- Catching behavioral gaps between expected and actual user outcomes

- Identifying edge cases that unit tests never simulate

- Building the evidence trail regulators require for AI systems

- Reducing the cost of fixing defects found in production versus pre-release

Skipping technical validation does not accelerate shipping. It defers the cost while making it much larger.

Technical validation standards and requirements for SaaS and AI features

Standards give you a concrete checklist instead of vague principles. Here are the ones that directly apply to B2B SaaS teams integrating AI in the EU.

Microsoft Business Central as a reference model

For SaaS products built on or integrated with Microsoft's ecosystem, automated validation checks are mandatory before any extension passes technical validation. The checklist includes extension compilation, static analysis, runtime compatibility testing, and data isolation checks. This is not a suggestion. Extensions that fail these checks do not ship. It is a useful model for any SaaS product: define a minimum automated validation gate that every release must clear.
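A minimum validation gate of this kind can be sketched in a few lines. This is an illustration under assumptions, not Microsoft's tooling: the check names mirror the four categories listed above, and the lambdas are hypothetical stubs that a real pipeline would replace with calls to a compiler, linter, and test harness.

```python
from typing import Callable

# A check is any zero-argument callable that returns True on success.
Check = Callable[[], bool]


def run_gate(checks: dict[str, Check]) -> tuple[bool, list[str]]:
    """Run every check and return (gate_passed, names_of_failed_checks)."""
    failures: list[str] = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception:
            # A check that crashes counts as a failure, never as a pass.
            ok = False
        if not ok:
            failures.append(name)
    return not failures, failures


# Hypothetical stubs standing in for real checks.
checks: dict[str, Check] = {
    "compilation": lambda: True,
    "static_analysis": lambda: True,
    "runtime_compatibility": lambda: True,
    "data_isolation": lambda: False,  # simulated failure
}

gate_passed, failed = run_gate(checks)
if not gate_passed:
    print(f"Release blocked, failed checks: {failed}")
```

Running all checks instead of stopping at the first failure is a deliberate choice here: the full list of failures is more useful in a release log than a single one.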

EU AI Act validation obligations

This is where many founders get surprised. For AI systems classified as high-risk, the EU AI Act requires validation on production-built units, not prototypes or staging environments. Any significant change to the AI system triggers full re-validation. Records must be retained for 10 years. That is not the same as your standard SOC2 audit cycle.
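The retention period is easy to encode so that no validation record is deleted early. The sketch below assumes the 10-year clock starts on a date you supply (which event starts the clock for your system is something to confirm for your own case), and `retention_end` is a hypothetical helper name.

```python
from datetime import date

RETENTION_YEARS = 10  # retention period stated above


def retention_end(start: date, years: int = RETENTION_YEARS) -> date:
    """Return the earliest date on which a record may be deleted."""
    try:
        return start.replace(year=start.year + years)
    except ValueError:
        # 29 February has no counterpart in a non-leap target year; use 28 February.
        return start.replace(year=start.year + years, day=28)


print(retention_end(date(2026, 3, 1)))
```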

The following table compares static and dynamic validation checks in a compliance context:

| Check type | What it covers | Compliance relevance |
|---|---|---|
| Static analysis | Code structure, known vulnerability patterns | Baseline EU AI Act technical documentation |
| Dynamic behavioral testing | Outputs under real user inputs | Required for high-risk AI classification |
| Bias and fairness audits | Statistical distribution of model outputs | Mandatory for regulated data categories |
| Performance under load | Latency and accuracy at production scale | Operational validation requirement |
| Data provenance checks | Training and inference data lineage | GDPR and AI Act record-keeping |

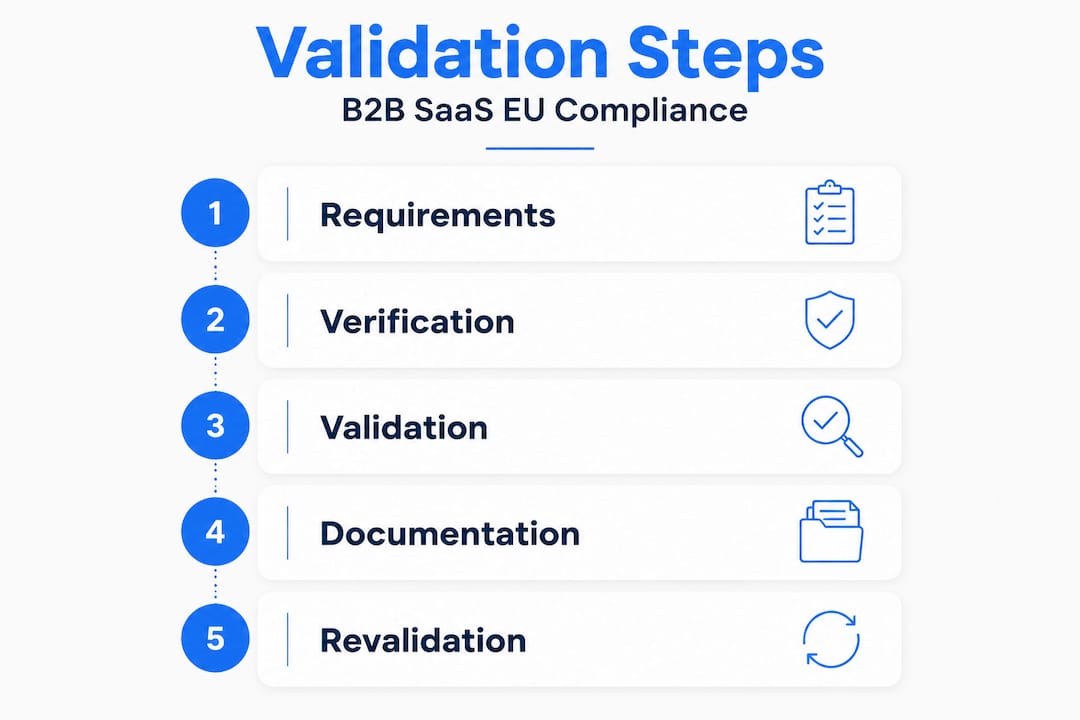
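As one illustration of the bias and fairness row, the sketch below compares the rate of positive outcomes across groups. The metric (the gap in positive rates) and the sample data are assumptions chosen for simplicity; the regulation does not prescribe this metric, and a real audit would likely use several.

```python
from collections import defaultdict


def positive_rate_by_group(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive model outcomes per group, from (group, outcome) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += int(outcome)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Largest difference in positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Illustrative outcomes only: (group label, model returned a positive decision).
records = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = positive_rate_by_group(records)
gap = parity_gap(rates)
print(f"positive rates: {rates}, parity gap: {gap:.2f}")
```

A gap near zero does not prove the model is fair, but a large gap is a cheap early signal that the output distribution deserves a closer look before release.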

Key steps in technical validation for an AI SaaS feature include:

- Define the intended purpose and target user population before writing any test

- Establish technical validation criteria covering accuracy, latency, fairness, and failure modes

- Run validation on production-equivalent data, not sanitized samples

- Document all findings with version control tied to your model and data versions

- Trigger re-validation whenever the model, training data, or deployment environment changes
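The steps above can be sketched as a small record builder that evaluates measured values against predefined criteria and ties the result to model and data versions. All names, thresholds, and version strings below are hypothetical; the structure is one possible shape for a validation record, not a mandated format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float
    higher_is_better: bool

    def passes(self, value: float) -> bool:
        """Compare a measured value against the threshold in the right direction."""
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


def build_validation_record(
    model_version: str,
    data_version: str,
    criteria: list[Criterion],
    measured: dict[str, float],
) -> dict:
    """Evaluate every criterion and link the outcome to model and data versions."""
    results = {
        c.name: {
            "value": measured[c.name],
            "threshold": c.threshold,
            "passed": c.passes(measured[c.name]),
        }
        for c in criteria
    }
    return {
        "model_version": model_version,
        "data_version": data_version,
        "results": results,
        "validated": all(r["passed"] for r in results.values()),
    }


# Hypothetical criteria covering accuracy, latency, and fairness.
criteria = [
    Criterion("accuracy", 0.90, higher_is_better=True),
    Criterion("p95_latency_ms", 500.0, higher_is_better=False),
    Criterion("parity_gap", 0.10, higher_is_better=False),
]
measured = {"accuracy": 0.93, "p95_latency_ms": 420.0, "parity_gap": 0.12}

record = build_validation_record("model-1.4.0", "data-2025-03", criteria, measured)
print(record["validated"])
```

In this example the feature meets its accuracy and latency targets but misses the fairness threshold, so the record as a whole is marked as not validated.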

The MVP validation checklist for early-stage teams is a useful starting point for building this process before you hit compliance requirements at scale.

One misconception worth addressing: general software certification does not substitute for AI-specific technical validation. Passing a penetration test tells you nothing about whether your LLM-based feature produces outputs that comply with EU fairness requirements.

Conducting technical validation: best practices for DACH SaaS founders

DACH founders face a specific regulatory environment that makes the importance of technical validation more concrete than it is for teams operating outside the EU.

Start small, validate early

Pre-validation experiments on small datasets are a well-established way to catch methodology problems before you invest in a full validation run. This applies directly to AI: run your model against a representative 5% sample of production data before signing off on the full validation. Issues found at this stage cost hours to fix. Issues found during an audit cost weeks and sometimes legal fees.
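Drawing that 5% sample reproducibly matters, because the sample itself becomes part of the validation evidence. The sketch below uses a seeded simple random sample; that is an assumption about what "representative" means, and stratified sampling may be the better fit if your data has rare but important segments. The function name and the integer record IDs are hypothetical.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def pre_validation_sample(records: Sequence[T], fraction: float = 0.05, seed: int = 42) -> list[T]:
    """Draw a reproducible simple random sample of the given fraction."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not records:
        return []
    size = max(1, round(len(records) * fraction))
    # A dedicated Random instance keeps the draw independent of global state.
    return random.Random(seed).sample(records, size)


production_ids = list(range(1000))  # stand-in for production record IDs
sample = pre_validation_sample(production_ids)
print(len(sample))
```

Recording the seed alongside the data version lets you regenerate exactly the same sample later if an auditor asks how the pre-validation run was constructed.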

Germany's 2026 AI Bill changes the landscape

DACH SaaS CTOs integrating AI must prepare for Germany's 2026 AI Bill, which establishes national authorities and regulatory sandboxes. Relying solely on SOC2 or ISO 27001 documentation for AI validation is a gap that German authorities will flag. You need AI-specific governance documentation that maps to the EU AI Act risk categories.

Best practices for technical validation in this region include:

- Maintain a change log that explicitly links each system modification to its compliance impact

- Separate your AI governance documentation from your general information security docs

- Document bias assessment results for any model trained on user-generated data

- Assign a named owner for validation records, not just a team or department

- Build re-validation triggers into your deployment pipeline rather than treating them as a manual step
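One way to build a re-validation trigger into a pipeline is to fingerprint everything the last validation covered and compare it on each deploy. This is a minimal sketch: the choice of inputs (model version, data version, environment settings) and all names are assumptions, and a real pipeline would load the last validated fingerprint from its validation records.

```python
import hashlib
import json


def fingerprint(model_version: str, data_version: str, environment: dict[str, str]) -> str:
    """Stable hash over everything the last validation run covered."""
    payload = json.dumps(
        {"model": model_version, "data": data_version, "env": environment},
        sort_keys=True,  # key order must not change the hash
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_revalidation(current: str, last_validated: str) -> bool:
    """Any difference from the validated fingerprint is a validation event."""
    return current != last_validated


# Hypothetical versions and environment settings.
env = {"region": "eu-central", "runtime": "py3.12"}
last_validated = fingerprint("model-1.4.0", "data-2025-03", env)
current = fingerprint("model-1.4.0", "data-2025-04", env)  # training data changed

if needs_revalidation(current, last_validated):
    print("Deployment blocked: re-validation required")
```

Storing the fingerprint in the change log entry gives each modification an unambiguous link to the validation run that covered it.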

Pro Tip: When you define your technical validation criteria, include a business acceptance condition. Ask: what business outcome would cause us to reject this feature even if it passes all technical checks? That framing connects validation directly to the value you are shipping, not just the tests you are running.

The EU AI Act does not care whether you are a five-person startup or a 500-person Mittelstand company. If your AI feature touches a regulated use case, the obligations apply on day one of production.

Digital transformation and AI's role in evolving validation processes

The tools for technical validation are changing fast. The adoption of those tools is not keeping pace.

| Digitization level | Share of organizations |
|---|---|
| Fully digital validation records | 13% |
| Partially digital | 61% |
| Primarily paper-based | 26% |

Source: 2026 State of Validation Study. The same study found that 74% of organizations that have gone digital report ROI exceeding initial predictions. The gap between those two numbers is where most SaaS teams are sitting right now: aware that digital validation tooling pays off, but not yet committed to the transition.

For AI-specific products, digital validation platforms offer concrete benefits:

- Automated audit trails that satisfy EU AI Act record-keeping without manual effort

- Version-linked validation records that update when models or data change

- Real-time monitoring that flags drift between validated and current model behavior

- Faster re-validation cycles when you deploy incremental model updates
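Drift monitoring, for example, can start very simply. The sketch below compares the distribution of predicted labels at validation time with the current one, using total variation distance. The metric, the 0.1 alert threshold, and the label data are assumptions for illustration; commercial platforms typically apply more sophisticated statistics.

```python
from collections import Counter


def label_distribution(labels: list[str]) -> dict[str, float]:
    """Relative frequency of each predicted label."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


def total_variation_distance(p: dict[str, float], q: dict[str, float]) -> float:
    """Half the summed absolute difference between two distributions (0 to 1)."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(label, 0.0) - q.get(label, 0.0)) for label in labels)


DRIFT_THRESHOLD = 0.1  # hypothetical alert level

# Illustrative predictions: at validation time versus in current production.
baseline = ["approve"] * 8 + ["reject"] * 2
current = ["approve"] * 5 + ["reject"] * 5

distance = total_variation_distance(
    label_distribution(baseline), label_distribution(current)
)
drifted = distance > DRIFT_THRESHOLD
print(f"distance: {distance:.2f}, drift detected: {drifted}")
```

A shift in output distribution does not say why behavior changed, only that the current system no longer matches what was validated, which is the signal a re-validation process needs.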

"Real AI validation spans technical, clinical, and operational layers. Governance is what makes adoption scale." Olga Lavinda, Health AI expert

That framing translates directly to B2B SaaS. Technical validation covers whether the AI feature works correctly. Operational validation covers whether it works correctly in the hands of your actual users, inside their workflows. You need both. Most teams only do the first.

The product validation guide covers operational and business validation layers in more detail, and it is worth reading alongside the technical standards to understand where they connect.

Why many SaaS founders misunderstand technical validation and what actually drives success

Here is the version of this topic that most articles skip.

The founders I see struggling with technical validation are not the ones who ignore it entirely. They are the ones who think they are doing it because their CI/CD pipeline is green. They have automated tests. They have a staging environment. They have a QA checklist. And then they get into a procurement conversation with a German enterprise client or a regulatory pre-check, and they discover their validation documentation covers verification only.

The EU AI Act surprises many by requiring production-level validation and 10-year record retention. Founders who discover this at the procurement stage are not facing a technical problem. They are facing a documentation and process problem that should have been solved six months earlier.

The second misunderstanding is about what passing tests actually means. A green test suite tells you the software does what you told it to do. It does not tell you that what you told it to do is right. That gap is where real technical validation lives. It is the gap between validating an idea and having evidence, not just confidence, that the feature works for real users.

My practical advice: tie your validation outcomes to business acceptance criteria from the start. Define what "validated" means in terms a non-technical buyer can verify. That documentation serves both your internal quality process and the external audit trail regulators want to see. Change management is not a soft skill add-on. It is the mechanism that keeps your validation evidence coherent as your product evolves.

The teams that do technical validation well treat it as a continuous process, not a pre-launch gate. Every model update, every new training dataset, every change in deployment infrastructure is a validation event. Build for that from day one.

How Hanad Kubat can help streamline your technical validation journey

Technical validation for AI-integrated SaaS products is not a one-time checkbox. It is an ongoing engineering discipline, and the EU AI Act makes it a legal one too.

If you are a B2B SaaS founder or CTO in the DACH region working through AI feature integration, EU AI Act compliance, or both, Hanad Kubat offers direct, hands-on support. AI integration sprints ship production-ready features in two weeks, with EU-resident inference and GDPR-aware architecture built in from the start. AI audits produce a prioritized compliance and technical roadmap in days, not quarters. Every engagement is with the engineer writing the code, not a project manager relaying messages. Pricing is fixed, timelines are real, and the output is audit-ready documentation alongside working software.

Frequently asked questions

What is the difference between technical validation and verification in software?

Verification confirms the software is built correctly against its specification, while technical validation confirms it solves the right problem for real users under actual conditions.

Why is technical validation critical for AI features in SaaS products in the EU?

Because, for AI systems classified as high-risk, the EU AI Act requires validation on production-built units with full re-validation on changes and 10-year record retention, making it a legal obligation rather than just a quality practice.

How can SaaS founders prepare for technical validation under Germany's 2026 AI Bill?

They should implement AI-specific governance documentation, avoid relying solely on general certifications like SOC2 for AI validation, and engage early with national sandbox authorities.

What are best practices to reduce validation workload and improve compliance?

Run small dataset pre-validation experiments to catch issues before full runs, maintain change logs tied to compliance impact, and gradually digitize validation records to build a continuous audit trail.